Project Virtualso

Training platform I prototyped that blends AI, NLP, and expressive virtual agents to rehearse interviews and public speaking.

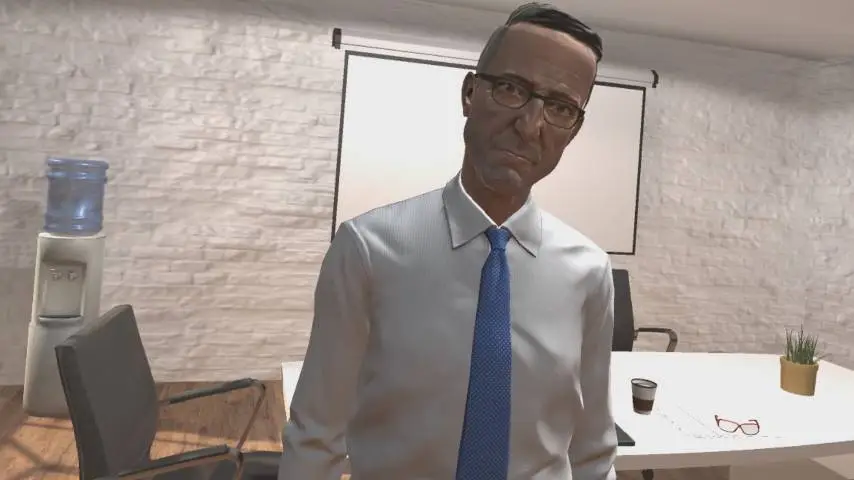

Project Virtualso blends AI, natural language processing, and VR to create conversational humanoid agents that respond with expressive facial animation and body language. I used it as a proving ground for combining my communication-arts research with Unity engineering to help people rehearse high-stakes conversations.

Inspiration

The project draws on my background in communication arts and VR to recreate nuanced human interactions. During the COVID-19 lockdowns, many people lost access to in-person mentorship, so I prototyped training simulations for job interviews and public speaking.

Virtual Interview

Users practice interviews with a conversational agent that listens, asks follow-up questions, and mirrors emotion through facial expressions and gestures. Intent classification and dialogue logic drive these behaviors.
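As a rough illustration of how intent classification and dialogue logic can fit together, the sketch below uses simple keyword matching to pick an intent, a follow-up question, and an agent expression. The intent labels, keywords, questions, and expression names are all hypothetical stand-ins; the prototype's actual classifier and dialogue rules may look quite different.

```python
import re

# Hypothetical intents and trigger keywords, for illustration only.
INTENT_KEYWORDS = {
    "experience": {"worked", "years", "role", "team"},
    "strength": {"strength", "good", "best", "skilled"},
    "weakness": {"weakness", "struggle", "improve"},
}

# Hypothetical follow-up questions, one per intent plus a fallback.
FOLLOW_UPS = {
    "experience": "What was the hardest problem you solved in that role?",
    "strength": "Can you give a concrete example of that strength?",
    "weakness": "What are you doing to improve on that?",
    "unknown": "Could you tell me more about that?",
}

# Hypothetical expression labels the agent could display while responding.
EXPRESSIONS = {
    "experience": "attentive",
    "strength": "encouraging",
    "weakness": "empathetic",
    "unknown": "neutral",
}


def classify_intent(utterance: str) -> str:
    """Return the intent whose keywords overlap most with the utterance."""
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    scores = {name: len(words & kws) for name, kws in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def respond(utterance: str) -> dict:
    """Map a transcribed answer to a follow-up question and an expression."""
    intent = classify_intent(utterance)
    return {
        "intent": intent,
        "follow_up": FOLLOW_UPS[intent],
        "expression": EXPRESSIONS[intent],
    }
```

Calling `respond("My biggest weakness is that I struggle with delegation")` would select the `weakness` intent and its follow-up. Keyword matching is used here only because it is easy to read; a trained classifier could replace `classify_intent` without changing `respond`.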

Virtual Presentation

Presenters deliver slides in front of a virtual audience of agents that react in real time. The simulation helps people manage stage anxiety and was piloted with professionals across multiple companies, with positive qualitative feedback.

Under the Hood

- Real-time speech-to-text through Azure Cognitive Services feeds a lightweight NLP pipeline that classifies tone, confidence, and topic changes.

- Custom blendshape rig drives nuanced facial expressions and gaze behavior based on detected emotions, creating a feedback loop users can respond to.

- Scenario editor lets coaches script question banks, difficulty curves, and success criteria without editing code.
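To make the emotion-to-expression step above more concrete, here is a minimal sketch of how a detected emotion could be turned into blendshape weights that ease toward a target pose each frame. The emotion labels, blendshape names, target values, and the `rate` parameter are assumptions made for illustration; they are not taken from the actual rig.

```python
# Hypothetical target poses: blendshape name -> weight in [0, 1].
EMOTION_TARGETS = {
    "neutral": {"browInnerUp": 0.0, "mouthSmile": 0.0, "eyeWide": 0.0},
    "encouraging": {"browInnerUp": 0.2, "mouthSmile": 0.7, "eyeWide": 0.1},
    "concerned": {"browInnerUp": 0.8, "mouthSmile": 0.0, "eyeWide": 0.3},
}


def step_weights(current: dict, emotion: str, rate: float = 0.25) -> dict:
    """Move each blendshape weight a fraction `rate` of the way to its target.

    Unknown emotions fall back to the neutral pose. Results are clamped
    to [0, 1] so the rig never receives out-of-range weights.
    """
    target = EMOTION_TARGETS.get(emotion, EMOTION_TARGETS["neutral"])
    updated = {}
    for name, goal in target.items():
        value = current.get(name, 0.0)
        value += rate * (goal - value)
        updated[name] = min(1.0, max(0.0, value))
    return updated


# Usage: ease from a neutral face toward an encouraging one over two frames.
weights = dict(EMOTION_TARGETS["neutral"])
for _ in range(2):
    weights = step_weights(weights, "encouraging", rate=0.5)
```

Easing toward the target, instead of snapping to it, is one simple way to keep expression changes from looking abrupt when the detected emotion flips between frames.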

Results

- Shared prototypes with career-coaching nonprofits to explore how mixed-reality role-play could shorten prep time for job seekers.

- Captured telemetry on pacing, filler-word frequency, and confidence markers that later influenced how I approach analytics in Microsoft Mesh onboarding flows.
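As an illustration of the kind of telemetry described above, the following sketch computes pacing (words per minute) and filler-word frequency from a transcript and its duration. The filler-word list and the metric names are assumptions chosen for the example, not the prototype's actual definitions.

```python
import re

# Hypothetical filler-word list; a real list would need tuning, since words
# such as "like" are also used legitimately.
FILLER_WORDS = {"um", "uh", "like", "basically", "actually"}


def speech_metrics(transcript: str, duration_seconds: float) -> dict:
    """Compute simple pacing and filler-word statistics for one answer."""
    words = re.findall(r"[a-z']+", transcript.lower())
    total = len(words)
    fillers = sum(1 for word in words if word in FILLER_WORDS)
    minutes = duration_seconds / 60
    return {
        "word_count": total,
        "words_per_minute": total / minutes if minutes > 0 else 0.0,
        "filler_count": fillers,
        "filler_rate": fillers / total if total else 0.0,
    }


# Usage: an 11-word answer spoken over 30 seconds.
metrics = speech_metrics(
    "Um, I think, like, the project was, uh, basically a success", 30
)
```

In this example `metrics["filler_count"]` is 4 out of 11 words, and the pace works out to 22 words per minute.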